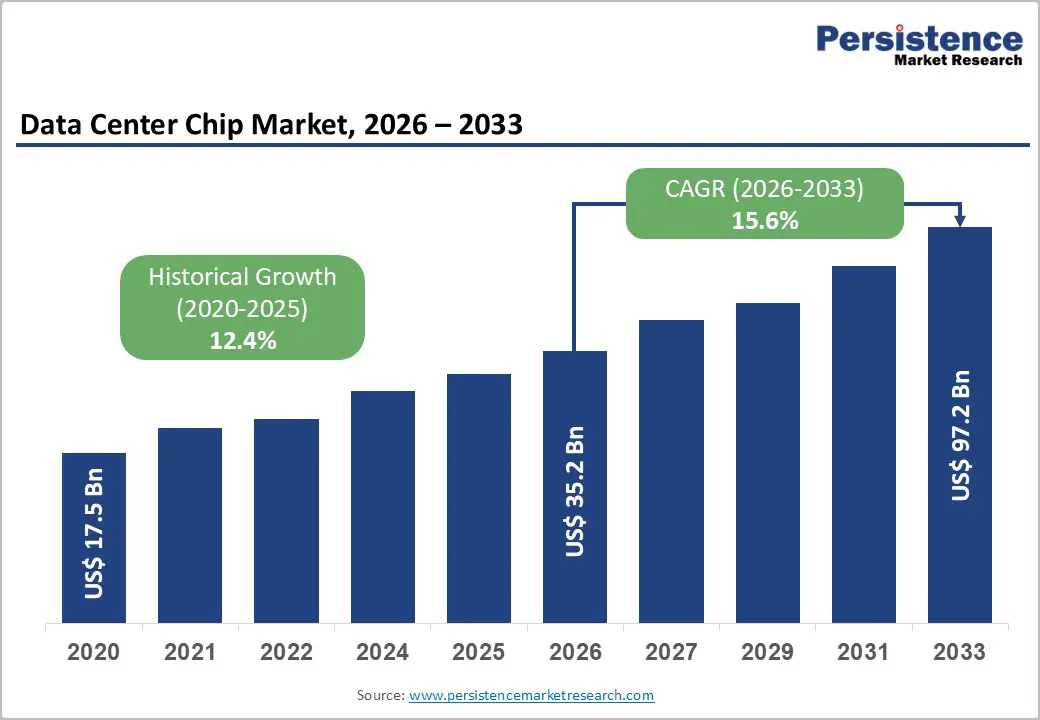

The global data center chip market is entering a transformative phase driven by artificial intelligence, cloud computing expansion, and the increasing demand for high-performance computing infrastructure. The market is projected to grow from US$ 35.2 billion in 2026 to US$ 97.1 billion by 2033, registering a strong CAGR of 15.6% during the forecast period. The rapid adoption of generative AI, machine learning, and large language models (LLMs) is fundamentally changing how enterprises design and scale data centers.
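The growth rate quoted above can be checked from the two endpoint figures. The following is a minimal sketch of the standard compound annual growth rate formula, assuming seven compounding periods between 2026 and 2033; the function and variable names are illustrative and do not come from the underlying report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.156 means 15.6%)."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted in the text: US$ 35.2 billion (2026) to US$ 97.1 billion (2033).
start_usd_bn = 35.2
end_usd_bn = 97.1
years = 2033 - 2026  # assumption: seven compounding periods

rate = cagr(start_usd_bn, end_usd_bn, years)
print(f"Implied CAGR: {rate:.1%}")  # prints "Implied CAGR: 15.6%"
```

The result matches the stated 15.6%, so the start value, end value, and growth rate are internally consistent.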

Modern AI applications require massive computational capabilities that traditional processors struggle to provide efficiently. As a result, organizations are increasingly deploying GPUs, AI accelerators, and custom ASICs designed specifically for training and inference workloads. Hyperscalers, governments, and enterprises are investing billions of dollars into next-generation AI infrastructure, creating unprecedented demand for advanced semiconductor technologies.

The market’s growth is further supported by national semiconductor strategies, rising cloud adoption, expanding digital economies, and the proliferation of edge computing environments. With AI becoming a strategic necessity across industries, data center chips are evolving into the backbone of the global digital infrastructure ecosystem.

Rising AI and Large Language Models Fuel Market Expansion

One of the primary growth drivers in the data center chip market is the explosive growth of AI and large language model deployments. AI workloads require parallel processing hardware capable of sustaining trillions of operations per second. GPUs have emerged as the preferred architecture for these tasks because they can execute thousands of mathematical computations concurrently.

In Q4 2024 alone, GPU revenue reached approximately US$ 28.5 billion, accounting for about 87.6% of AI data center chipset sales. The widespread adoption of AI applications across enterprises, healthcare, finance, manufacturing, and government institutions continues to accelerate demand for specialized compute hardware.
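The two Q4 2024 figures together imply a size for the overall AI data center chipset segment in that quarter. The sketch below is simple back-of-the-envelope arithmetic on the quoted numbers, not a figure taken from the report; the variable names are illustrative.

```python
# Figures quoted in the text for Q4 2024.
gpu_revenue_usd_bn = 28.5  # GPU revenue, US$ billion
gpu_share = 0.876          # GPU share of AI data center chipset sales

# Implied total chipset sales and the remainder attributable to non-GPU chips.
implied_total_usd_bn = gpu_revenue_usd_bn / gpu_share
non_gpu_usd_bn = implied_total_usd_bn - gpu_revenue_usd_bn

print(f"Implied total chipset sales: US$ {implied_total_usd_bn:.1f} billion")
print(f"Implied non-GPU chipset sales: US$ {non_gpu_usd_bn:.1f} billion")
```

On these numbers, total AI data center chipset sales for the quarter come to roughly US$ 32.5 billion, leaving about US$ 4 billion for all non-GPU chip types.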

The launch of advanced AI architectures such as NVIDIA’s Blackwell platform has significantly increased inference performance while reducing operational costs. These innovations are enabling enterprises to train larger models and deploy AI services at scale. Additionally, inferencing workloads are growing rapidly as businesses integrate AI into everyday applications such as customer support, cybersecurity, analytics, and automation.

As generative AI adoption expands globally, the need for advanced chips with higher memory bandwidth, lower latency, and better energy efficiency will continue to rise.

Government Investments Strengthen Semiconductor Infrastructure

Government-backed semiconductor initiatives are playing a critical role in strengthening the data center chip ecosystem. Countries worldwide now view semiconductor independence as a strategic economic and national security priority.

The United States CHIPS and Science Act allocated US$ 52.7 billion for semiconductor manufacturing and research. Major technology companies including Intel, TSMC, Samsung, and Micron Technology received substantial grants to expand advanced chip fabrication facilities in the United States.

Similarly, the European Chips Act aims to mobilize more than €43 billion in public and private investment to strengthen Europe’s semiconductor capabilities. Governments across Asia Pacific are also investing heavily in domestic chip manufacturing and AI infrastructure expansion.

These large-scale policy initiatives reduce supply chain vulnerabilities, encourage local production, and create long-term stability for semiconductor investments. They also support innovation in advanced packaging, memory technologies, and AI accelerator development.

At the same time, hyperscalers such as Microsoft, Amazon, Google, and Meta are investing billions into AI-ready data centers. Massive infrastructure initiatives like the Stargate project further reinforce the long-term growth outlook for the market.

GPUs Continue to Dominate the Market

Graphics Processing Units (GPUs) currently represent the dominant segment in the data center chip market, accounting for approximately 57% of component revenue in 2026. Their massively parallel architecture makes them ideal for AI model training, scientific computing, and real-time analytics.

NVIDIA continues to lead the AI accelerator landscape with approximately 85% market share, supported by its CUDA software ecosystem and advanced GPU architectures. AMD has also strengthened its position through its Instinct series accelerators, while Intel is aggressively expanding its AI processor portfolio.

The dominance of GPUs is closely tied to the rapid expansion of AI applications. Neural network training consists largely of very large matrix multiplications, which CPUs alone cannot handle efficiently. As AI models become larger and more complex, demand for high-performance GPUs is expected to remain exceptionally strong.
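To give a sense of scale, the sketch below counts the arithmetic in a single dense matrix multiplication using the standard 2 × m × k × n estimate (one multiply and one add per term). The layer dimensions are hypothetical values chosen for illustration and do not describe any specific model.

```python
def matmul_flops(m: int, k: int, n: int) -> int:
    """Floating-point operations to multiply an (m x k) matrix by a (k x n) matrix."""
    return 2 * m * k * n  # one multiply and one add per inner-product term


# Hypothetical layer: 4,096 input rows, 8,192 input features, 8,192 output features.
rows, in_features, out_features = 4096, 8192, 8192

flops = matmul_flops(rows, in_features, out_features)
independent_outputs = rows * out_features  # each output element can be computed in parallel

print(f"Operations for one multiplication: {flops:.2e}")
print(f"Independent output elements: {independent_outputs:,}")
```

Each output element is an independent dot product, which is why hardware with thousands of parallel arithmetic units suits this workload. Training repeats multiplications of this kind across many layers and many steps.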

Beyond AI, GPUs are increasingly used in cloud gaming, digital twins, simulation environments, and autonomous systems, further broadening their commercial applications.

AI ASICs Emerging as the Fastest-Growing Segment

While GPUs dominate current deployments, AI-specific Application-Specific Integrated Circuits (ASICs) are becoming the fastest-growing component category. Hyperscalers are increasingly designing proprietary chips optimized for their unique workloads.

Google’s Tensor Processing Units (TPUs), Amazon’s Trainium and Inferentia chips, Microsoft’s Maia processors, and Meta’s MTIA accelerators illustrate the growing trend toward custom silicon development. These chips are designed to improve efficiency, reduce power consumption, and lower inference costs at hyperscale.

According to industry forecasts, AI ASIC revenue could reach US$ 84.5 billion by 2030. This creates major opportunities for semiconductor design firms, foundries, packaging companies, and electronic design automation providers.

Custom ASICs also enable organizations to reduce dependence on third-party suppliers while improving performance optimization for specialized AI workloads. As AI adoption becomes more widespread, proprietary silicon strategies are expected to become a major competitive differentiator among hyperscalers.

HBM Supply Constraints Remain a Major Challenge

Despite strong market growth, supply chain bottlenecks continue to challenge the industry. High Bandwidth Memory (HBM), an essential component in AI accelerators, remains in limited supply due to surging demand.

Samsung and SK Hynix dominate global HBM production and are aggressively expanding capacity. However, AI deployment growth is occurring faster than supply expansion, resulting in extended lead times and pricing pressures.

Advanced packaging technologies such as TSMC’s CoWoS also represent a significant bottleneck. These technologies are essential for integrating GPU and AI accelerator dies with memory stacks in high-performance packages.

The combination of HBM shortages and packaging constraints may temporarily slow AI infrastructure deployments and moderate short-term revenue growth. Nevertheless, ongoing investments in fabrication capacity and advanced manufacturing are expected to gradually alleviate these challenges over the forecast period.

Healthcare and BFSI Create Long-Term Demand Opportunities

Healthcare and banking sectors are emerging as highly valuable verticals for data center chip adoption. These industries require real-time analytics, secure processing environments, and regulatory compliance, making high-performance computing infrastructure essential.

In healthcare, AI-powered diagnostics, genomic sequencing, precision medicine, and telemedicine applications require substantial computational resources. Healthcare IT spending is projected to exceed US$ 390 billion by 2030, creating durable demand for specialized AI chips.

The banking, financial services, and insurance (BFSI) sector is also adopting AI at a rapid pace. Financial institutions increasingly rely on AI for fraud detection, risk analysis, high-frequency trading, and payment analytics.

Additionally, central banks worldwide are deploying AI systems to improve regulatory oversight and transaction monitoring. These mission-critical applications require deterministic latency and secure computing environments, further strengthening demand for advanced data center chips.

Large Data Centers Lead Infrastructure Deployments

Large hyperscale data centers account for approximately 72% of market revenue due to the immense computational requirements of AI workloads. Frontier AI models require tightly connected GPU clusters linked by ultra-low-latency interconnects.

Hyperscalers are investing heavily in gigawatt-scale campuses capable of supporting hundreds of thousands of GPUs and AI accelerators. These facilities form the backbone of cloud AI services, enterprise AI platforms, and large-scale model training operations.

However, small and medium-sized data centers are also experiencing rapid growth due to edge AI applications. Industries such as retail, healthcare, and manufacturing increasingly require localized AI processing to reduce latency and comply with data sovereignty regulations.

Edge data centers are becoming especially important for real-time analytics, autonomous systems, and industrial automation applications.

North America Maintains Market Leadership

North America remains the leading regional market due to its concentration of semiconductor companies, hyperscalers, and AI research institutions. The United States accounts for a substantial share of global semiconductor revenue and hosts major industry leaders including NVIDIA, AMD, Intel, Broadcom, and Micron Technology.

The region benefits from strong venture capital investment, advanced research ecosystems, and supportive government policies. Massive cloud infrastructure spending by AWS, Microsoft Azure, and Google Cloud continues to drive strong demand for data center chips.

The US semiconductor ecosystem also maintains significant advantages in chip design, AI software frameworks, and advanced manufacturing partnerships.

Asia Pacific Emerges as the Fastest-Growing Region

Asia Pacific is the fastest-growing region in the data center chip market. The region combines strong semiconductor manufacturing capabilities with accelerating AI infrastructure investments.

South Korea remains critical to the global AI supply chain through Samsung and SK Hynix’s dominance in HBM production. China continues to invest aggressively in domestic semiconductor development and AI self-sufficiency initiatives.

India is also emerging as a major growth market driven by digital transformation, rising cloud adoption, and expanding AI infrastructure investments. Southeast Asian countries such as Singapore, Malaysia, Indonesia, and Thailand are attracting significant hyperscale data center investments due to favorable regulatory environments and strategic geographic positioning.

As AI adoption accelerates across Asia Pacific, the region is expected to play an increasingly important role in global semiconductor demand and manufacturing expansion.

Competitive Landscape Intensifies

The competitive landscape of the data center chip market is becoming increasingly dynamic. NVIDIA remains the dominant player in AI accelerators, but competition is intensifying from AMD, Intel, Broadcom, and hyperscaler-developed ASICs.

Companies are focusing heavily on software ecosystem development, advanced packaging, photonic interconnects, and liquid cooling compatibility to improve performance and energy efficiency.

Strategic partnerships between chip designers, foundries, cloud providers, and memory manufacturers are becoming increasingly important for maintaining technological leadership.

Emerging technologies such as optical computing, chiplet architectures, and energy-efficient AI accelerators are expected to shape the next phase of market evolution.

Conclusion

The global data center chip market is entering a period of extraordinary expansion fueled by AI transformation, hyperscale infrastructure investments, and government-backed semiconductor initiatives. As enterprises increasingly rely on AI-driven applications, the need for specialized high-performance chips will continue to accelerate.

GPUs currently dominate the market, but AI ASICs are rapidly emerging as a critical growth segment. While supply chain constraints around HBM and advanced packaging remain challenges, ongoing investments in manufacturing capacity are expected to improve long-term market stability.

North America continues to lead the industry, while Asia Pacific is becoming the fastest-growing regional market. Healthcare, BFSI, telecom, and cloud computing sectors are expected to remain major demand contributors throughout the forecast period.

With the market projected to reach US$ 97.1 billion by 2033, data center chips are poised to become one of the most strategically important segments of the global semiconductor industry.